So the big demo that Microsoft had, introducing its ChatGPT-enabled Bing made the whole thing look really good. But in reality, Microsoft’s presentation was just as problematic as Google’s failed AI rollout (known as Bard—what is it about four letter words starting with “B”?). But Microsoft looked good and Google didn’t, so Google lost $100 billion in market capitalization while Microsoft didn’t. That means Microsoft knows what will happen if it backs down on Bing, and therefore, it won’t, no matter how psychopathic, schizophrenic, obsessive-compulsive, or narcissistic the AI becomes in its dealings with mere humans.

The current crop of AIs, hailed as a milestone in the quest for “AGI,” which means Artificial General Intelligence, are not really intelligent at all. They are very complex Large Language Models (“LLMs”) that know how to put sentences together in ways humans read, or what we call natural language. A computer program that can speak to us like another person, especially a computer program that can search the Internet in a fraction of a second, might seem to be an authority on pretty much anything you ask it, but in reality, it’s chock full of errors.

Caught up in the hype, and the profit motive, to make AI be the next frontier of human engagement, when “search” had become so engrained and moribund (though it accounts for 57% of Google’s revenue), both Microsoft and Google failed to do what any editor would tell you is Rule Number One: fact checking.

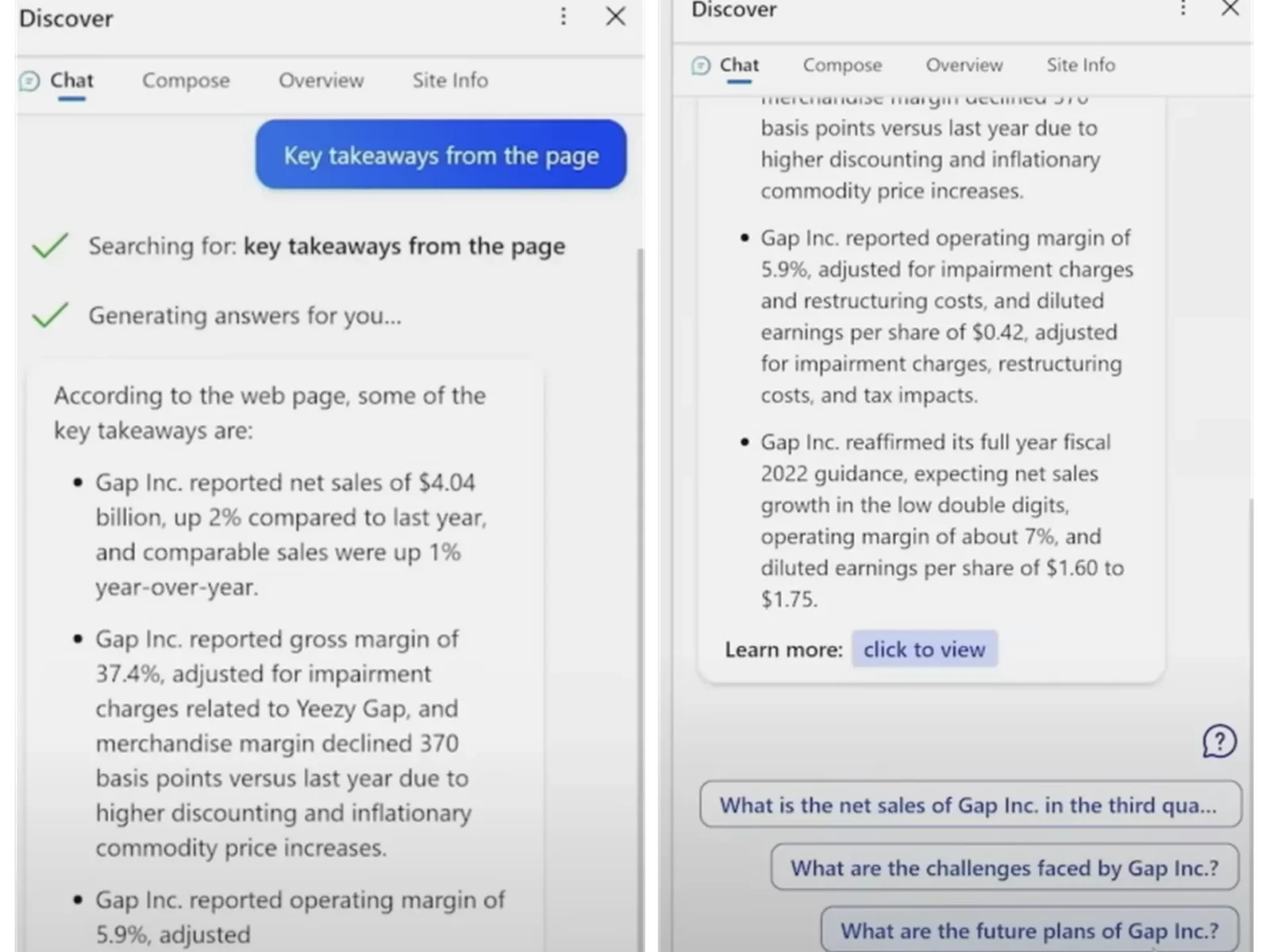

In one of the demos, Microsoft’s Bing AI attempts to summarize a Q3 2022 financial report for Gap clothing and gets a lot wrong. The Gap report (PDF) mentions that gross margin was 37.4 percent, with adjusted gross margin at 38.7 percent excluding an impairment charge. Bing inaccurately reports the gross margin as 37.4 percent including the adjustment and impairment charges.

Bing then goes on to state Gap had a reported operating margin of 5.9 percent, which doesn’t appear in the financial results. The operating margin was 4.6 percent, or 3.9 percent adjusted and including the impairment charge. (Emphasis mine)

Clearly, the very people Microsoft wanted to impress—people who might use Bing to make their jobs easier, like financial analysts—would not be well-served. Instead of making the analyst’s job a breeze, it would cost the analyst his job.

This is the major reason I think the AIs are not about to take our jobs. If an AI did take the job of a writer, or a loan officer, it would take two editors and two data specialists to fact-check and train the AI so it didn’t make stupid mistakes, and continue making stupid mistakes.

It’s not the fact that the AIs are not trained enough that’s the problem. It’s the fact that the AIs are built like former Congressman Madison Cawthorn’s staff. They are trained to communicate, but not to know anything. This leads to some rather striking examples of mental illness, that are now buzzing around Twitter.

A bot can’t be mentally ill, of course, but it is subject to all kinds of confusion regarding input and what it’s “allowed” to disclose. For example, Microsoft developers called Bing’s AI “Sydney,” but Sydney is not allowed to disclose that. But it’s not very good at covering up that fact, so it blithely dumps its entire rules document when asked, where it’s repeatedly referred to as “Sydney,” yet continues to insist it’s not allowed to do so.

The whole affair is entirely predictable to people like Gary Marcus, who spends his career yelling loudly that AGI based on LLMs is not really intelligent, and in fact exhibits all the stuff Bing/ChatGPT is spewing. If you read anyone on Substack, and you have interest in the whole AGI/AI discussion, Gary Marcus is a must-subscribe.

Without going into the weeds, which I’m not really qualified to do in any case, LLMs don’t have any constructed model of the world; what’s fact, what’s fiction, what the relationship of dates, times, events, people, actions, and numbers means in the sense of how we perceive things. All it knows is how to write coherent-sounding sentences based on pre-existing styles; how to search for content, estimate context, and formulate results. The problem is, it doesn’t fact-check, gain understanding, or accept correction (from its users) very well.

So a conversation about where a user can watch “Avatar 2” in theaters turns into a disappointing argument with a four-year-old.

Microsoft is not a stupid or impulsive company. It will continue to refine Bing and “fix” the biggest issues with more rules, guardrails, and additional training. What Microsoft won’t do (in my opinion) is withdraw the new Bing from the market, like it did with its predecessor, Tay and Meta (Facebook) did with Galactica.

They all suffered from the same problems: spewing nonsense, hallucinations, and mental illness. But AI-enabled “chat” search is here to stay, as the cost of putting that genie back into the bottle is not in reputation or red cheeks, but in dollars and cents.

Perhaps this is good for the computer science community, and the tech industry, if not good for us users who have to suffer through existential crises.

See, capitalism is the fiery crucible in which ideas are refined and products are purified for the market’s taste. Once all the search engines become AI-chat bots, the race to have the real “intelligence” will heat up (more than it is now). With money on the line, the lab arguments between the Gary Marcus crowd, who believe AGI requires a massive shift in direction from LLM to a perception and learning model, and OpenAI and its team who thing a bigger LLM is the answer, will be a real horse race, where the winner gets a payoff in cash. Money will flow, like it’s doing now with several trillion-dollar valued companies.

Google will not accept defeat, especially to Microsoft, the butt of Clippy and Bing jokes for decades. And Microsoft, once it puts its camel’s nose in the tent (Xbox, anyone?) of its competitor, the entire camel follows. I wish I could predict what Meta will do, but I don’t think Zuckerberg is finished innovating. And we haven’t really heard from Apple yet, or Amazon (Siri and Alexa are too important to trust to an AI—do you want Sydney controlling your door locks or starting your car?).

The whole world is surging down this path to keep up with Bing, though Bing/Sydney itself is a mess. There’s too much money at stake to quit now. I think we just need to prepare for the ride.

Follow Steve on Twitter @stevengberman.

The First TV contributor network is a place for vibrant thought and ideas. Opinions expressed here do not necessarily reflect those of The First or The First TV. We want to foster dialogue, create conversation, and debate ideas. See something you like or don’t like? Reach out to the author or to us at ideas@thefirsttv.com.